Campaigns

Sometimes it can take years to make a single-line code change.

As engineering organizations grow, both the problems and their solutions become more intricate. What might once have taken an afternoon now takes months of coordinated effort. The system (both the technology and the people) is larger, more complex, and more difficult to change than ever before.

But change is necessary.

We might be looking to pay off some technical debt, or to tee up an architectural change that unlocks a better customer experience, cost savings, etc. What tool can we use to coordinate many groups of people, hold those groups accountable, and eventually succeed?

Enter what I call the Campaign.

Elements of a Campaign

A Campaign is a long-running effort to enact global change safely within a sociotechnical system.

Every campaign needs the following:

- A Goal

- Metrics toward that goal

- Buy-in

- A Method of Accountability

- A “Window”

- A Target Date

Campaigns work well to address:

- Technical changes with large social components.

- Technical changes that require everyone to do a little bit of work.

- High-value or inevitable future worlds.

Campaigns don’t work with:

- Efforts that resist measurement, or where measurement is likely to be actively harmful. For example: “We should improve the design of our code.”

- Organizations that cannot buy in to the effort, for one reason or another.

- Low-value Campaigns. There is no need to coordinate many teams if the effort can be localized.

Many Campaigns have a trivial technical goal. My favorite example: the request timeout value is likely one line in a configuration file, yet safely lowering it can take months of work.

Let’s step through the individual components in greater detail.

The Goal

Every Campaign needs a goal that can be expressed in a single sentence. The goal should be separate from the metric. It may or may not include implementation details; I usually recommend including some hint of implementation so that the scope of the effort stays limited.

Here are a few examples:

- “We want to improve the resiliency and throughput of our background processes by making all jobs idempotent.”

- “We want to improve the customer experience and resiliency of our system by limiting the amount of time a web request can take.”

- “We want to improve the health of our database by enforcing a timeout on all queries.”

If the goal feels a bit too open, consider adding non-goals as well to keep the effort focused.

Focus on Impact

Before beginning a Campaign, you need to convince a lot of people that this is a valuable effort and that now is the right time to tackle it. A crisp articulation of “Why this Campaign?” and “Why now?” is required.

Write these down, but expect to repeat them in other media often.

Focus on the benefits of achieving the goal. The more we ground the impact in objective outcomes (e.g., “our system is more resilient to failure”) and the less in personal technology preferences (e.g., “we were using Tech X but now we use Tech Y”), the better.

Metrics

Every Campaign needs a metric or two to enable tracking progress and accountability.

Each metric should be assignable to a specific team or individual. Once the metrics go green, we should have achieved our goal ipso facto.¹

Choosing a metric can be difficult. It should not be susceptible to Rule Beating, where the metric goes green but the intent of the goal is missed.

Metrics need to be incremental and as fine-grained as necessary. We should see the slow march of progress, rather than a sudden “Okay, we’re done!”

There should be many ways to move a metric. In the global request timeout example, we can get under the timeout by reducing the total number of database queries, speeding up JSON serialization, caching, loading less data, etc.

A few metrics derived from the example goals above:

- Number of background jobs that are not idempotent.

- Number of endpoints whose request duration exceeds the threshold.

- Number of database queries whose duration exceeds the threshold.

Metrics should be easy to grok and easy to slice a few different ways. In our request threshold example, we might want to surface both the “number of endpoints remaining” and the “percentage of all endpoints remaining.”
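To make slicing concrete, here is a minimal Python sketch that reports the request-threshold metric as a count, as a percentage, and per owning team. It assumes you already know each endpoint’s owning team and its observed 99.9th-percentile duration; the `Endpoint` record, the `summarize` function, and the sample endpoints are hypothetical and not taken from any particular tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str            # hypothetical route name, e.g. "GET /invoices"
    team: str            # owning team, used to slice the metric
    p999_seconds: float  # observed 99.9th-percentile request duration


def summarize(endpoints: list[Endpoint], threshold_seconds: float) -> dict:
    """Report the same metric as a count, a percentage, and per team."""
    remaining = [e for e in endpoints if e.p999_seconds > threshold_seconds]
    total = len(endpoints)
    return {
        "remaining": len(remaining),
        "percent_remaining": 100.0 * len(remaining) / total if total else 0.0,
        "remaining_by_team": dict(Counter(e.team for e in remaining)),
    }


endpoints = [
    Endpoint("GET /invoices", "billing", 7.2),
    Endpoint("GET /payroll", "payroll", 3.1),
    Endpoint("POST /reports", "billing", 12.0),
    Endpoint("GET /profile", "identity", 0.4),
]
print(summarize(endpoints, threshold_seconds=5.0))
# {'remaining': 2, 'percent_remaining': 50.0, 'remaining_by_team': {'billing': 2}}
```

The per-team slice is what makes the metric assignable: each number in `remaining_by_team` has an owner.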

Buy-in

A Campaign by definition reaches across the organization and touches many teams. Every team, mission, individual, and sub-org has different priorities and different worries.

For a Campaign to be successful, it needs participation from everyone involved. Buy-in will look different in different organizations, but it will generally be a mix of Carrot and Stick (choosing to do something vs. being coerced into doing something).

Leading with the benefits (i.e. the Carrot) can go a long way here:

- “If we are able to make jobs idempotent, the Infrastructure team can take over responsibility for background jobs and reduce costs by 90%.”

- “If we are able to make all requests take less than 2 seconds, the customer experience will improve and we will be able to reduce costs.”

For some teams, the benefits may not be convincing enough, or may still fall below their priority threshold. If many teams do not find the benefits convincing, you might not have a Campaign worth pursuing.

A Method of Holding Teams Accountable

How much further do we have to go? Where might we need to invest more?

Most Campaigns will have a central place to answer questions like these. Spreadsheets work just fine here; dashboards are even better. They might be populated automatically or by hand (usually with the help of a script). Whatever form it takes, it should quickly answer a few questions:

- How far along are we?

- Which teams are doing well? (We should follow up with these teams to see what’s working for them.)

- Which teams are falling behind? (We should follow up with these teams to see if they need more help, resources, etc.)

This should be passively available, but also circulated on some regular cadence. Weekly, monthly, or quarterly, everyone involved should get an update in their inbox.
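A small script is often enough to produce the regular update. The Python sketch below assumes you can count each team’s remaining violations (for example, endpoints over the threshold) both at the start of the Campaign and today; the `team_progress` and `report` names, the data shape, and the sample numbers are hypothetical. Sorting by progress puts the teams worth learning from at the top and the teams that may need help at the bottom.

```python
def team_progress(baseline: dict[str, int], current: dict[str, int]) -> dict[str, float]:
    """Fraction of each team's starting violations that have been fixed.

    Negative values mean a team now has more violations than at the baseline.
    Teams with zero violations at the baseline score 1.0 if they still have
    none, else 0.0.
    """
    progress = {}
    for team, start in baseline.items():
        now = current.get(team, 0)
        if start == 0:
            progress[team] = 1.0 if now == 0 else 0.0
        else:
            progress[team] = (start - now) / start
    return progress


def report(baseline: dict[str, int], current: dict[str, int]) -> str:
    """Plain-text update, most progress first, suitable for a recurring email."""
    progress = team_progress(baseline, current)
    start_total = sum(baseline.values())
    now_total = sum(current.get(team, 0) for team in baseline)
    overall = 1.0 if start_total == 0 else (start_total - now_total) / start_total
    lines = [f"Overall: {overall:.0%} complete ({now_total} remaining)"]
    for team, frac in sorted(progress.items(), key=lambda kv: kv[1], reverse=True):
        lines.append(f"  {team}: {frac:.0%} ({current.get(team, 0)} remaining)")
    return "\n".join(lines)


print(report({"billing": 10, "payroll": 4, "identity": 2},
             {"billing": 8, "payroll": 1, "identity": 0}))
# Overall: 44% complete (9 remaining)
#   identity: 100% (0 remaining)
#   payroll: 75% (1 remaining)
#   billing: 20% (8 remaining)
```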

A “Window”

The Window is a method of prioritizing work while ensuring that progress is permanent. The Window moves over time. It is optional, but helpful in most Campaigns.

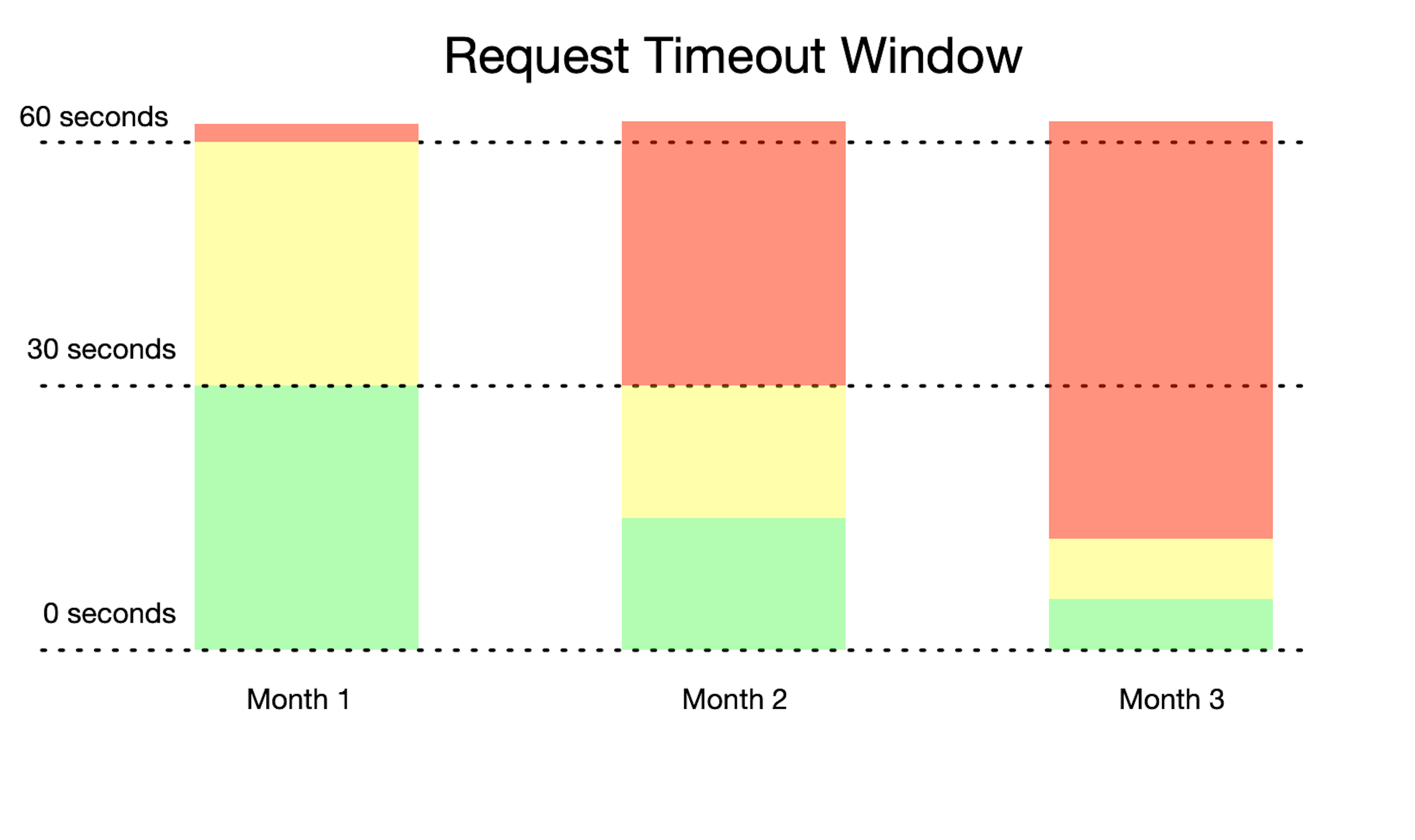

A Window usually defines two thresholds: Warning and Not Allowed. In a Campaign to enforce a global request timeout, here is how the Window might start and then evolve:

1. The Goal is to make no web request take longer than 5 seconds, 99.9% of the time.

2. The Window is set to Warn: 30 seconds, Not Allowed: 60 seconds. 60 seconds is the current limit set by our load balancer and the behavior that exists today.

3. Teams tackle all requests that fall between Warn and Not Allowed until there are no more in the Window.

4. We move the Window down to Warn: 15 seconds, Not Allowed: 30 seconds.

5. GOTO step 3.
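The loop above can be sketched as a small Python data structure. This is an illustration under assumptions: the `Window` class and `next_window` helper are hypothetical, thresholds are in seconds, “longer than” is treated as strictly greater, and the rule for moving the Window (the old Warn becomes the new Not Allowed and Warn halves) is inferred from the single 30/60 to 15/30 step described, not a fixed rule.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Window:
    warn: float         # seconds; slower requests fall inside the Window
    not_allowed: float  # seconds; slower requests are rejected

    def classify(self, seconds: float) -> str:
        if seconds > self.not_allowed:
            return "not_allowed"
        if seconds > self.warn:
            return "warn"  # inside the Window: teams work on these now
        return "ok"

    def advance(self) -> "Window":
        # Hypothetical rule: old Warn becomes the new Not Allowed, Warn halves
        # (reproduces the 30/60 -> 15/30 step).
        return Window(warn=self.warn / 2, not_allowed=self.warn)


def next_window(window: Window, durations: Iterable[float]) -> Window:
    """Move the Window down only once no observed request is outside "ok"."""
    if all(window.classify(d) == "ok" for d in durations):
        return window.advance()
    return window


start = Window(warn=30, not_allowed=60)
print(start.classify(45))            # warn
print(next_window(start, [45, 10]))  # Window(warn=30, not_allowed=60)
print(next_window(start, [12, 10]))  # Window(warn=15.0, not_allowed=30)
```

Requiring every observed duration to be “ok” before moving is deliberately strict; a real Campaign might instead measure at the goal’s percentile.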

Using this approach yields a few benefits:

- Organizers of the Campaign provide a default prioritization approach. Teams do not have to develop their own.

- By setting and enforcing “Not Allowed,” we ensure that progress is permanent. A team cannot introduce a new request that takes longer than the Not Allowed threshold.

- Teams are warned in advance of a future change. They might receive a weekly email report, an exception, or a Slack message notifying them of something that is okay today but not okay in the future.

- Incremental value is delivered. If we stop the Campaign for whatever reason, lasting value will remain.
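In many stacks the load balancer or web server enforces Not Allowed, but the same two thresholds can also be applied in application code. The following Python sketch assumes an asyncio-based service and wraps a hypothetical zero-argument async handler: `asyncio.wait_for` cancels the work once Not Allowed is exceeded, and a request that finishes above Warn is logged, the kind of signal that could feed a weekly report or Slack message. The `enforce_window` name, the logger name, and `slow_report` are illustrative only.

```python
import asyncio
import logging
import time
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("campaign.window")  # hypothetical logger name


async def enforce_window(handler: Callable[[], Awaitable[T]],
                         warn: float, not_allowed: float,
                         name: str = "request") -> T:
    """Run handler under the Window's thresholds (both in seconds)."""
    start = time.monotonic()
    # Not Allowed: wait_for cancels the handler and raises asyncio.TimeoutError.
    result = await asyncio.wait_for(handler(), timeout=not_allowed)
    elapsed = time.monotonic() - start
    if elapsed > warn:
        # Warn: allowed today, but flagged for the owning team.
        log.warning("%s took %.2fs (warn %.2fs, not allowed %.2fs)",
                    name, elapsed, warn, not_allowed)
    return result


async def slow_report() -> str:  # stand-in for a real request handler
    await asyncio.sleep(0.2)
    return "done"


print(asyncio.run(enforce_window(slow_report, warn=0.1, not_allowed=1.0)))
# prints "done"; because 0.2s exceeds warn, a warning is also logged
```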

An Example: Global Request Timeouts

I’ve threaded an example throughout this post; let’s look at it in one place.

One Campaign I’ve seen in a few organizations is the need to set and/or reduce the total amount of time a web request can take. This is the type of thing that is easy to ignore, especially in applications serving industries with high switching costs or a principal-agent problem. (In either case, a slow degradation of performance never bubbles up as a priority.) Many Campaigns take the form of “If we had known to set this limit in the first place, this would be a lot easier.”

Let’s put it all together.
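As one way to make this example’s goal directly measurable, here is a Python sketch of the metric: it checks each endpoint against the goal (no request over 5 seconds at the 99.9th percentile) and lists the endpoints still missing it. It assumes per-request durations are available as (endpoint, seconds) pairs, for example parsed from load-balancer access logs, and uses the nearest-rank definition of a percentile; those choices and all names here are hypothetical.

```python
import math
from collections import defaultdict
from fractions import Fraction
from typing import Iterable

# Values from the example goal: no request over 5 seconds, 99.9% of the time.
GOAL_SECONDS = 5.0
GOAL_QUANTILE = Fraction(999, 1000)  # exact, so the rank has no float rounding


def nearest_rank(samples: list[float], quantile: Fraction) -> float:
    """Smallest sample such that at least `quantile` of samples are <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(quantile * len(ordered)))
    return ordered[rank - 1]


def endpoints_missing_goal(request_log: Iterable[tuple[str, float]]) -> list[str]:
    """request_log: (endpoint, seconds) pairs, one per observed request."""
    by_endpoint: dict[str, list[float]] = defaultdict(list)
    for endpoint, seconds in request_log:
        by_endpoint[endpoint].append(seconds)
    return sorted(
        endpoint
        for endpoint, samples in by_endpoint.items()
        if nearest_rank(samples, GOAL_QUANTILE) > GOAL_SECONDS
    )


requests_seen = [("GET /invoices", 1.0)] * 999 + [("GET /invoices", 30.0)]
requests_seen += [("POST /reports", 6.0)] * 10
print(endpoints_missing_goal(requests_seen))  # ['POST /reports']
```

A single 30-second outlier out of 1,000 requests still meets the goal, which is exactly the leeway “99.9% of the time” grants.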

Further Reading

- “Migrations: The sole scalable fix to tech debt,” Will Larson.

- Project Management

- Objectives and Key Results

Conclusion

This post is a generalization of an effort I find myself doing more and more at Gusto. Every Campaign is a little different and needs to be adapted accordingly.

Hopefully this framework helps you and your teams make complicated technical changes that require large-scale behavioral or process changes.

Special thanks to Toni Rib, Max Stoiber, Danny Zlobinsky, Kito Pastorino, and Shayon Mukherjee for reading early drafts of this post and providing feedback.

-

¹ This is identical to the relationship between Objectives and Key Results.